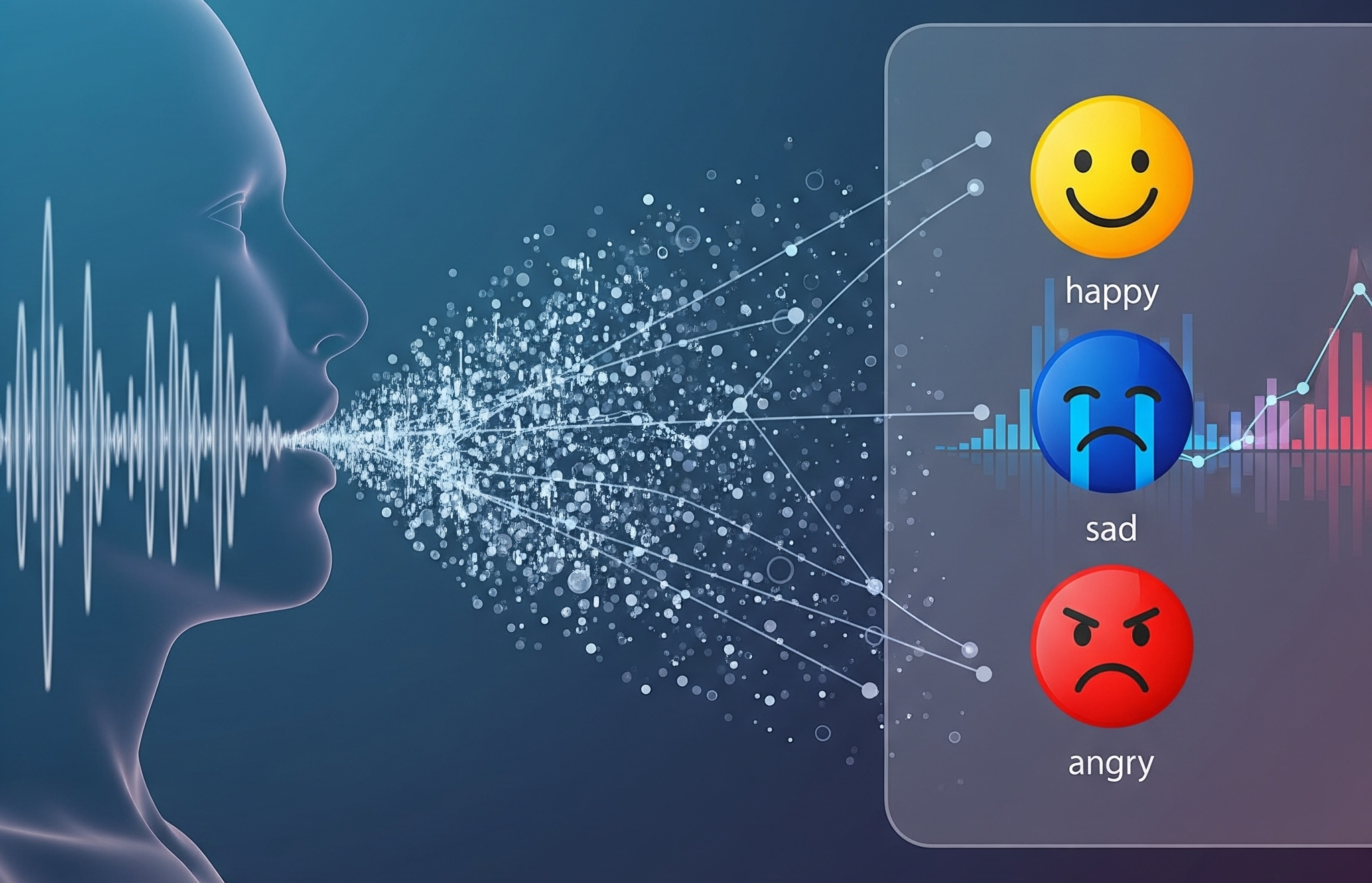

Audio: Speech Sentiment Analysis

Unlocking Emotional Intelligence from Audio with AI-Powered Speech Sentiment Detection

Project Overview

Speech sentiment analysis uses AI and machine learning to process audio recordings, detect emotional tone, and classify speech into emotions such as neutral, angry, calm, happy, sad, fearful, disgusted, and surprised. The system combines audio signal processing, which captures how something is said (for example pitch, loudness, and speaking rate), with Natural Language Processing (NLP) of what is said, using a transcript produced by speech-to-text conversion. Machine learning classifiers then map these signals to specific emotions, providing insights for applications such as customer service, market research, and social media monitoring.
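To make the acoustic side of this pipeline concrete, here is a minimal, dependency-free Python sketch. It is an illustration under stated assumptions, not the project's implementation. It assumes mono samples in [-1.0, 1.0] at 16 kHz, splits them into 25 ms frames, computes two classic acoustic features per frame (root-mean-square energy and zero-crossing rate), summarizes them per utterance, and picks the closest emotion centroid. Every function name and the toy centroid values are hypothetical; a production system would more typically use richer features such as MFCCs or spectrograms and a trained model in TensorFlow or PyTorch.

```python
import math
from typing import Dict, List, Sequence

# Assumed input: mono samples in [-1.0, 1.0] at 16 kHz, so 400 samples = 25 ms
# frames and 160 samples = 10 ms hops. All names here are hypothetical.

def frame_signal(samples: Sequence[float], frame_len: int, hop: int) -> List[Sequence[float]]:
    """Split a waveform into overlapping frames, dropping any trailing partial frame."""
    return [samples[i:i + frame_len] for i in range(0, len(samples) - frame_len + 1, hop)]

def rms(frame: Sequence[float]) -> float:
    """Root-mean-square energy of a frame, a rough proxy for loudness."""
    return math.sqrt(sum(x * x for x in frame) / len(frame))

def zero_crossing_rate(frame: Sequence[float]) -> float:
    """Fraction of adjacent sample pairs that change sign, a rough proxy for noisiness."""
    crossings = sum(1 for a, b in zip(frame, frame[1:]) if (a >= 0) != (b >= 0))
    return crossings / (len(frame) - 1)

def utterance_features(samples: Sequence[float], frame_len: int = 400, hop: int = 160) -> List[float]:
    """Summarize per-frame RMS and ZCR as [mean_rms, std_rms, mean_zcr, std_zcr]."""
    frames = frame_signal(samples, frame_len, hop)
    if not frames:
        raise ValueError("signal is shorter than one frame")
    features: List[float] = []
    for values in ([rms(f) for f in frames], [zero_crossing_rate(f) for f in frames]):
        mean = sum(values) / len(values)
        std = math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))
        features += [mean, std]
    return features

def nearest_centroid(features: Sequence[float], centroids: Dict[str, Sequence[float]]) -> str:
    """Return the emotion label whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(features, centroids[label]))

# HYPOTHETICAL toy centroids for two of the emotions; a real system would learn
# its model from labeled recordings rather than use hand-picked numbers.
TOY_CENTROIDS = {
    "calm": [0.05, 0.01, 0.05, 0.01],
    "angry": [0.50, 0.20, 0.15, 0.05],
}

# A quiet, steady 200 Hz tone (1 second) as a stand-in for a calm utterance.
tone = [0.05 * math.sin(2 * math.pi * 200 * n / 16000) for n in range(16000)]
print(nearest_centroid(utterance_features(tone), TOY_CENTROIDS))  # → calm
```

Nearest-centroid classification is used here only because it fits in a few lines; it shows the shape of the feature-to-label mapping without implying anything about the model this project actually uses.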

Technologies Used

- Artificial Intelligence (AI)

- Machine Learning (ML)

- Natural Language Processing (NLP)

- Audio Signal Processing

- Python, with TensorFlow or PyTorch

Key Features

- Real-time sentiment detection

- Multiclass emotion recognition (neutral, happy, sad, etc.)

- Integration with customer service platforms

- Dashboard/visualization for sentiment analytics
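Real-time, multiclass detection can be pictured as a rolling buffer that re-scores the most recent stretch of audio as each new chunk arrives, turning the model's raw per-emotion scores into probabilities with a softmax. The sketch below is a hypothetical illustration: `score_window` stands in for a trained model, `toy_scorer` is a deliberately trivial placeholder, and the chunk and window sizes (assuming 16 kHz audio) are far shorter than a practical system would use, chosen only for readability.

```python
import math
from collections import deque
from typing import Callable, Iterable, Iterator, List, Sequence, Tuple

# The eight emotion classes named in the project overview.
EMOTIONS = ["neutral", "angry", "calm", "happy", "sad", "fearful", "disgusted", "surprised"]

def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax: raw scores -> probabilities that sum to 1."""
    top = max(logits)
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def stream_emotions(
    chunks: Iterable[Sequence[float]],
    score_window: Callable[[List[float]], Sequence[float]],
    window_len: int,
) -> Iterator[Tuple[str, float]]:
    """Yield (label, probability) for the latest window each time a chunk arrives.

    score_window is a HYPOTHETICAL model hook that returns one logit per entry
    in EMOTIONS for a window of audio samples.
    """
    window: deque = deque(maxlen=window_len)  # oldest samples fall off automatically
    for chunk in chunks:
        window.extend(chunk)
        if len(window) < window_len:
            continue  # not enough audio buffered yet
        probs = softmax(score_window(list(window)))
        best = max(range(len(EMOTIONS)), key=probs.__getitem__)
        yield EMOTIONS[best], probs[best]

def toy_scorer(window: List[float]) -> List[float]:
    """HYPOTHETICAL stand-in for a trained model: loud -> 'angry', quiet -> 'calm'."""
    logits = [0.0] * len(EMOTIONS)
    peak = max(abs(x) for x in window)
    logits[EMOTIONS.index("angry" if peak > 0.5 else "calm")] = 3.0
    return logits

# Four 160-sample chunks (10 ms each at an assumed 16 kHz); the last two are loud.
chunks = [[0.1] * 160, [0.1] * 160, [0.9] * 160, [0.9] * 160]
for label, prob in stream_emotions(chunks, toy_scorer, window_len=320):
    print(label, round(prob, 2))
# → calm 0.74, then angry 0.74, then angry 0.74 (one per line)
```

The bounded `deque` keeps memory constant regardless of call length, which is the property that matters for live audio; how often to re-score (every chunk here) is a latency versus compute trade-off.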

Use Cases

- Enhancing customer support call quality

- Market and audience sentiment analysis

- Social media and brand monitoring

- Automated emotion tagging in audio archives

Project Outcomes

- Improved emotion tracking accuracy

- Enhanced reporting for stakeholders

- Better customer understanding and satisfaction

Challenges Overcome

- Accurate emotion detection in noisy environments

- Audio-to-text conversion for NLP

- Continuous model improvement
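One simple, widely used technique for handling background noise is energy-based voice activity detection, sketched below; it is not necessarily the method used in this project. It estimates a noise floor from the quietest frames and keeps only frames that rise a fixed number of decibels above it, so silence and steady background hum are not passed to the emotion classifier. The function names, the 20 ms frame size (320 samples at an assumed 16 kHz), the quantile, and the 6 dB margin are illustrative assumptions.

```python
import math
import random
from typing import List, Sequence

def frame_rms(samples: Sequence[float], frame_len: int) -> List[float]:
    """RMS energy of consecutive, non-overlapping frames."""
    return [
        math.sqrt(sum(x * x for x in samples[i:i + frame_len]) / frame_len)
        for i in range(0, len(samples) - frame_len + 1, frame_len)
    ]

def speech_frames(samples: Sequence[float], frame_len: int = 320,
                  noise_quantile: float = 0.1, margin_db: float = 6.0) -> List[int]:
    """Return indices of frames judged to contain speech.

    The noise floor is the frame energy at a low quantile (this assumes some
    frames are background-only); a frame counts as speech when its energy
    exceeds that floor by margin_db decibels.
    """
    energies = frame_rms(samples, frame_len)
    if not energies:
        return []
    ranked = sorted(energies)
    floor = ranked[int(noise_quantile * (len(ranked) - 1))]
    threshold = floor * 10 ** (margin_db / 20)  # dB margin -> amplitude ratio
    return [i for i, e in enumerate(energies) if e > threshold]

# Synthetic test signal: 10 frames of low-level noise, with a louder 200 Hz
# tone standing in for speech in frames 4-6.
rng = random.Random(0)
signal = [rng.gauss(0.0, 0.01) for _ in range(3200)]
for n in range(1280, 2240):
    signal[n] += 0.3 * math.sin(2 * math.pi * 200 * n / 16000)
print(speech_frames(signal))  # → [4, 5, 6]
```

A fixed energy threshold works for steady background noise but struggles with loud, speech-like interference, which is one reason noisy environments remain a hard case for emotion detection.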

Client/Industry

- B2B SaaS, call centers, research agencies, etc.

Our Role

- End-to-end development, model training, deployment, support, etc.